This is Part 2 of a two-part series. Part 1 addressed the risks and restrictions organizations face in deploying artificial intelligence (AI) and the key elements of an AI strategy.[1] This part details how to develop an AI governance function.

Steps in creating an AI governance function

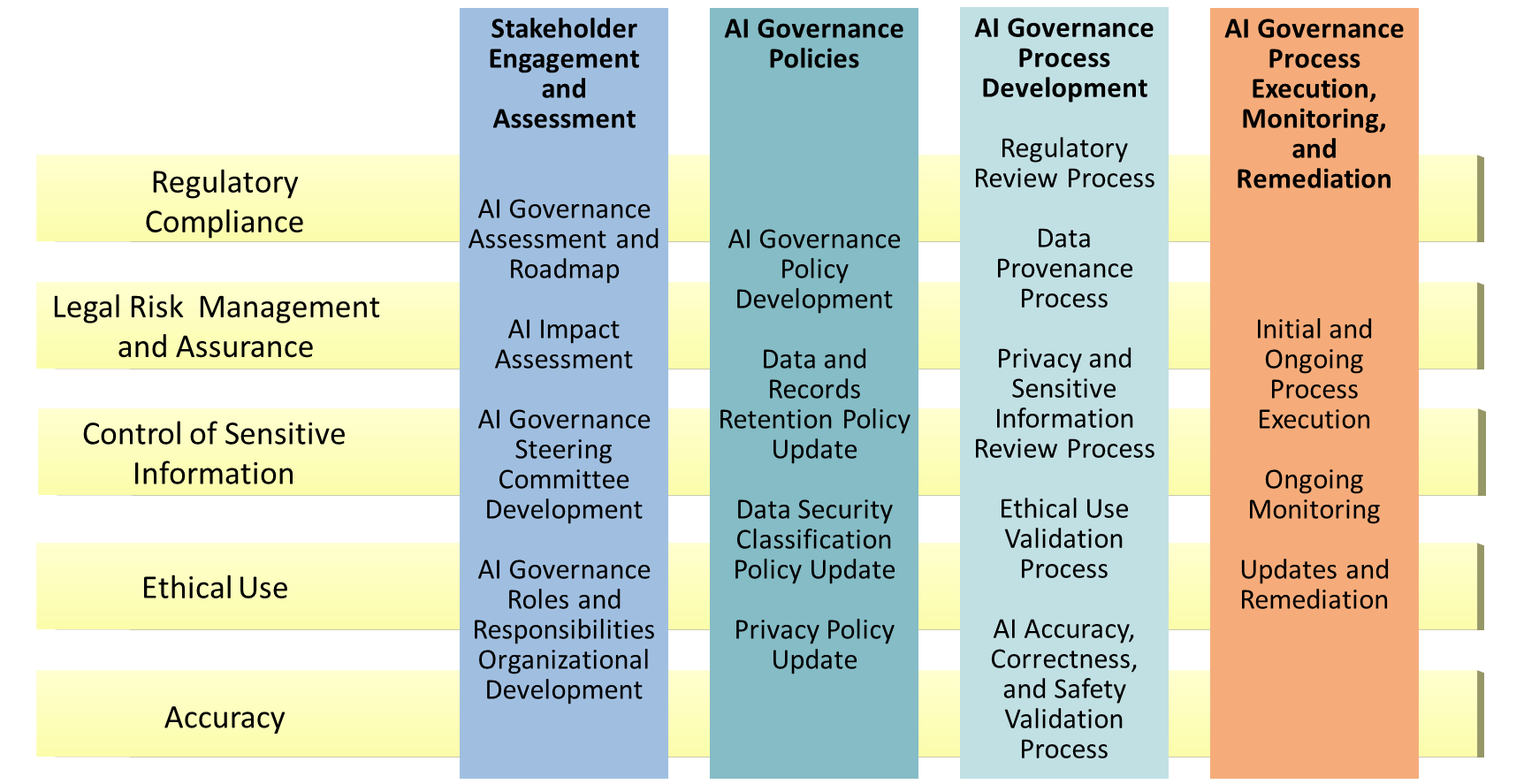

The breadth of AI governance requirements can be overwhelming. Instead of addressing everything at once, break program development into a series of steps (see Figure 1).

Stakeholder engagement and assessment

While it may be tempting to develop a program with a small group of stakeholders, doing so can slow or even halt program development. As a first step, assess needs and socialize them with a broader group of stakeholders early in the process.

Assessment and roadmap

Assess current and targeted compliance and risk requirements, engaging key stakeholders in the process. Develop a comprehensive AI governance roadmap that reflects the steps necessary to reach your targeted maturity level. Ideally, your AI governance roadmap will mirror the AI application development steps, so that governance is built into AI applications rather than added as an afterthought. Determining up front what needs to be done, and to what level, can speed up complex projects like these.

Impact assessment

Some jurisdictions may specifically require an impact assessment focusing on the effects of AI on people and organizations. This impact assessment can draw on the work done for the other elements of this step.

Steering committee

AI governance is complex, requiring the participation of a variety of stakeholders, including compliance, risk, legal, privacy, information governance, data governance, IT, and even business functions. A cross-functional steering committee is the most effective model for managing governance on an ongoing basis. This committee should be formed early in the process to ensure both that all risks and requirements are covered and that each group feels a sense of “buy-in” to the process.

Roles and responsibilities

Many AI governance functions will require participation from different groups. Establishing ongoing roles and responsibilities, along with a supporting organizational design, ensures that AI governance becomes a continual process rather than a one-time exercise.

Many AI initiatives start and are developed within IT, with little interaction with other stakeholders until the system is ready for deployment. This often leads to delays when the system then needs to be vetted or redesigned. Compliance professionals and other stakeholders should engage with IT early, offer to help navigate these complex environments, and explain that designing governance into a system is much faster and easier than trying to retrofit it afterward.